This blog post describes how I managed to configure a multi module JavaScript project with Grunt for my spare-time project. It uses Protractor for end-to-end testing, but I believe this multi module approach would be easily portable to a non-Angular stack.

In my spare time I work on a pet project based on the EAN stack (Express, Angular, Node.JS). The project doesn't need a database, which is why MongoDB is missing from the famous MEAN stack. The initial draft of the project was scaffolded by Yeoman using the angular-fullstack generator. The build is based on Grunt. Apart from the fact that the generator used Grunt, I chose it over Gulp because it is probably more mature. Also, the Grunt vs. Gulp battle seems to me similar to the Maven vs. Gradle one in the Java world. I never had a need to move away from Maven, and I don't like the idea of writing custom algorithms in a build system (bad Bash and Ant experience in the past). Grunt is similar to Maven in its configuration approach: I can very easily understand any Maven build, and I expect similar build consistency from Grunt.

Nearly immediately I started to feel that the Node.JS and Express back-end build concerns (a Mocha based test suite) are quite different from the concerns of the Angular front-end build (minification, Require.js optimization, a Karma based test suite, …). There was a clear distinction between the two.

My main problem was having separate test suites. Karma makes generating unit test code coverage very easy. Setting up code coverage stats for the Mocha based server unit test suite was slightly trickier, but I managed to do it with Istanbul. So far so good. But when I wanted to send my stats to the Coveralls server, I could do that for only one suite, because Coveralls supports one set of coverage stats per project. Combining the stat files didn't work nicely for me.

Multi module JavaScript project

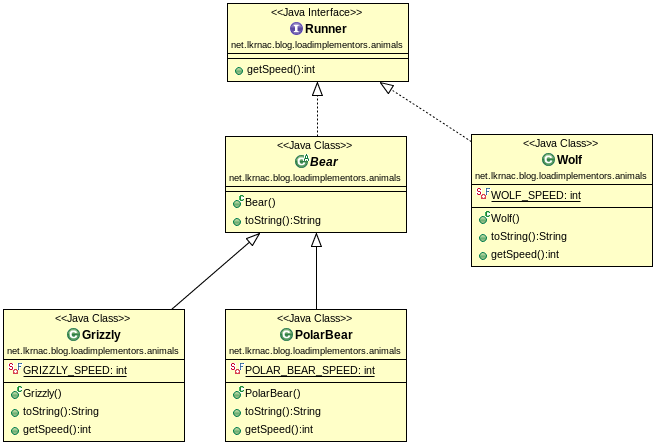

So I felt a need to split the project. Since I'm a developer with a Java background, this situation reminded me of a Maven multi module project. In this concept you can have various separate projects (sub-modules) that can evolve independently. These are grouped and integrated together by a special multi module project. This way you can build a large enterprise application that is still modular.

So I told myself that I wouldn't go any further with this stack until I figured out how to configure a multi module project. I split the main repository, called primediser, into two:

(Notice that I created the branch blog-2014-05-19-multi-module-project to keep the code consistent with this blog post.)

So now I am able to set up Continuous Integration for each project and submit coverage stats separately. But how do I integrate the two together? I created an umbrella project that doesn't contain any JavaScript production code (similar to a multi module project in the Maven world). It contains only the Protractor E2E tests and a Gruntfile that integrates the two modules. This project is located in a separate GitHub repository called primediser.
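The Gruntfile snippets in this post refer to the sub-modules through Grunt templates such as `<%= dirs.server %>`, which Grunt resolves against its own configuration (for example when a value is read with `grunt.config.get`). The `dirs` section itself isn't shown in this post, so what follows is only a sketch: the directory names are hypothetical placeholders, and `expand` is a deliberately minimal stand-in for Grunt's far more capable template processing, meant just to show how one config entry feeds every sub-project reference.

```javascript
// Hypothetical directory names; the real sub-project names aren't listed here.
const config = {
  dirs: {
    server: 'my-server-module',
    client: 'my-client-module'
  }
};

// Minimal stand-in for Grunt's template processing: replaces every
// "<%= some.path %>" with the value found at that path in `data`.
function expand(template, data) {
  return template.replace(/<%=\s*([\w.]+)\s*%>/g, function (match, path) {
    const value = path.split('.').reduce(function (obj, key) {
      return obj == null ? undefined : obj[key];
    }, data);
    if (value === undefined) {
      throw new Error('Unknown config path: ' + path);
    }
    return String(value);
  });
}

console.log(expand('https://github.com/lkrnac/<%= dirs.server %>', config));
// prints https://github.com/lkrnac/my-server-module
```

Renaming a sub-module then means touching a single `dirs` entry instead of every clone, install and build target.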

It uses various Grunt plugins and one conditional trick to do the integration:

grunt-git

This plugin is used to clone the sub-projects mentioned above from GitHub:

```javascript
gitclone: {
  cloneServer: {
    options: {
      repository: 'https://github.com/lkrnac/<%= dirs.server %>',
      directory: '<%= dirs.server %>'
    },
  },
  cloneClient: {
    options: {
      repository: 'https://github.com/lkrnac/<%= dirs.client %>',
      directory: '<%= dirs.client %>'
    },
  },
},
```

I could have used the grunt-shell plugin for this (I use it anyway, as you'll see further on), but grunt-git seems to be platform independent. Grunt-shell obviously isn't, because it runs raw shell commands.
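As far as I can tell, each gitclone target above boils down to running `git clone <repository> <directory>`. The sketch below is not grunt-git's actual implementation; it only illustrates why that kind of approach ports well: Node's `execFileSync` starts `git` directly with an argument array, so no shell syntax or quoting rules are involved. `gitCloneArgs` and `cloneRepository` are hypothetical helpers, and the runner is injectable only so the logic can be exercised without git or network access.

```javascript
const { execFileSync } = require('child_process');

// Builds the argument list for "git clone <repository> <directory>".
function gitCloneArgs(options) {
  return ['clone', options.repository, options.directory];
}

// Starts git directly (no shell involved), so there is no platform-specific
// quoting or "cd" handling. `run` defaults to execFileSync but can be
// replaced, e.g. with a fake in tests.
function cloneRepository(options, run = execFileSync) {
  return run('git', gitCloneArgs(options), { stdio: 'inherit' });
}

// Hypothetical usage with the values a gitclone target would resolve to:
// cloneRepository({
//   repository: 'https://github.com/lkrnac/my-server-module',
//   directory: 'my-server-module'
// });
```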

Conditional cloning

Git can clone a repository into a given directory only once; a second attempt fails. Therefore we need to clone the sub-projects only when they don't exist yet. It is obviously up to the developer to

```javascript
// At the top of Gruntfile.js
var fs = require('fs');

// Inside module.exports = function (grunt) { ... }

// Queues the given gitclone target only if its target directory doesn't exist yet
var cloneIfMissing = function (subTask) {
  // grunt.config.get processes templates, so this returns the resolved directory
  var directory = grunt.config.get('gitclone.' + subTask + '.options.directory');
  if (!fs.existsSync(directory)) {
    grunt.task.run('gitclone:' + subTask);
  }
};

grunt.registerTask('cloneSubprojects', function () {
  cloneIfMissing('cloneClient');
  cloneIfMissing('cloneServer');
});
```

My setup expects that the developer updates the sub-projects as needed. It also expects a Continuous Integration system that throws away the entire workspace after each build. If you use Jenkins, which keeps the workspace between builds, you could use a similar conditional trick in conjunction with the gitpull task that grunt-git provides.
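The Jenkins variant could look roughly like the following sketch. It assumes you configure `gitpull` targets named the same as the corresponding `gitclone` targets (the target names `client` and `server` used here are hypothetical); `chooseGitTask` is a hypothetical helper, not part of grunt-git.

```javascript
const fs = require('fs');

// Hypothetical helper: picks the grunt-git task for one sub-project target.
// A missing directory means a fresh workspace, so the repository is cloned;
// an existing one (e.g. a workspace Jenkins kept from the previous build)
// is updated with a pull instead.
function chooseGitTask(target, directory) {
  const task = fs.existsSync(directory) ? 'gitpull' : 'gitclone';
  return task + ':' + target;
}
```

Inside a registered task you could then call something like `grunt.task.run(chooseGitTask('client', grunt.config.get('dirs.client')))` in place of `cloneIfMissing`.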

grunt-shell

After cloning, we need to install the dependencies of both sub-projects. Unfortunately I didn't find any platform independent way of doing this (to be honest, I didn't look very deeply).
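One option I haven't tried in this project, so treat it only as a sketch: Node's `child_process.spawnSync` accepts a `cwd` option, which replaces the `cd <dir> && … && cd ..` chaining, and `shell: true` hands the command line to the platform's default shell (`/bin/sh` on Unix, `cmd.exe` on Windows), both of which understand `&&` and resolve `npm` on their own. `runIn` and the directory names in the usage comments are hypothetical. The trailing `cd ..` in the grunt-shell commands is most likely unnecessary anyway, because each command runs in its own child shell.

```javascript
const { spawnSync } = require('child_process');

// Runs a command line inside the given directory using the platform's
// default shell. Throws if the command can't be started or exits non-zero.
function runIn(directory, commandLine) {
  const result = spawnSync(commandLine, { cwd: directory, shell: true, stdio: 'inherit' });
  if (result.error) {
    throw result.error;
  }
  if (result.status !== 0) {
    throw new Error('"' + commandLine + '" failed in ' + directory + ' with exit code ' + result.status);
  }
  return result.status;
}

// Hypothetical usage, mirroring the two grunt-shell targets:
// runIn('my-server-module', 'npm install');
// runIn('my-client-module', 'npm install && bower install');
```

The grunt-shell configuration I actually use looks like this: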

```javascript
shell: {
  npmInstallServer: {
    options: {
      stdout: true,
      stderr: true
    },
    command: 'cd <%= dirs.server %> && npm install && cd ..'
  },
  npmInstallClient: {
    options: {
      stdout: true,
      stderr: true
    },
    command: 'cd <%= dirs.client %> && npm install && bower install && cd ..'
  }
},
```

grunt-hub

The next step is to kick off the builds of the sub-projects via the grunt-hub plugin:

```javascript
hub: {
  client: {
    src: ['<%= dirs.client %>/Gruntfile.js'],
    tasks: ['build'],
  },
  server: {
    src: ['<%= dirs.server %>/Gruntfile.js'],
    tasks: ['build'],
  },
},
```

Task configurations

```javascript
grunt.registerTask('npmInstallSubprojects', [
  'shell:npmInstallServer',
  'shell:npmInstallClient'
]);

grunt.registerTask('buildSubprojects', [
  'hub:server',
  'hub:client'
]);

grunt.registerTask('coverage', [
  'clean:coverageE2E',
  'copy:coverageStatic',
  'instrument',
  'copy:coverageJsServer',
  'copy:coverageJsClient',
  'express:coverageE2E',
  'protractor_coverage:chrome',
  'makeReport',
  'express:coverageE2E:stop'
]);

grunt.registerTask('default', [
  'cloneSubprojects',
  'npmInstallSubprojects',
  'buildSubprojects',
  //'build',
  'coverage'
]);
```

As you can see, there is one task I haven't mentioned yet, called coverage:

- It gathers the builds of the sub-projects into a dedicated sub-directory
- Instruments the files
- Runs the Express back-end
- Kicks off the Protractor end-to-end tests
- and measures front-end test coverage
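To make the execution order of these alias tasks explicit, here is a simplified model (not Grunt's real task queue) that flattens the aliases registered above into the sequence in which they run. `cloneSubprojects` is a function task rather than an alias, so the model keeps it as a single step even though at runtime it queues `gitclone` targets. `aliases` and `expandTask` are hypothetical names.

```javascript
// Simplified model of Grunt alias tasks: an alias registered with
// grunt.registerTask(name, [...]) expands, in order, into its sub-tasks.
// Anything that isn't an alias is kept as a single (plugin or function) task.
const aliases = {
  default: ['cloneSubprojects', 'npmInstallSubprojects', 'buildSubprojects', 'coverage'],
  npmInstallSubprojects: ['shell:npmInstallServer', 'shell:npmInstallClient'],
  buildSubprojects: ['hub:server', 'hub:client'],
  coverage: [
    'clean:coverageE2E', 'copy:coverageStatic', 'instrument',
    'copy:coverageJsServer', 'copy:coverageJsClient', 'express:coverageE2E',
    'protractor_coverage:chrome', 'makeReport', 'express:coverageE2E:stop'
  ]
};

function expandTask(name, seen = []) {
  if (!Object.prototype.hasOwnProperty.call(aliases, name)) {
    return [name];
  }
  if (seen.includes(name)) {
    throw new Error('Circular alias: ' + seen.concat(name).join(' -> '));
  }
  return aliases[name].reduce(function (queue, sub) {
    return queue.concat(expandTask(sub, seen.concat(name)));
  }, []);
}

console.log(expandTask('default').join('\n'));
```

The flattened order shows why `express:coverageE2E:stop` comes last. Keep in mind that Grunt aborts the remaining queue by default when a task fails (unless you run it with `--force`), so after a failing Protractor run the stop step is never reached.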

The main driver of this task is the grunt-protractor-coverage plugin. I already wrote a blog post about this plugin. That post was written at a stage when the multi module configuration wasn't in place yet, so you can expect differences (there is also a branch dedicated to that blog post). The core of the setup should be the same, though.